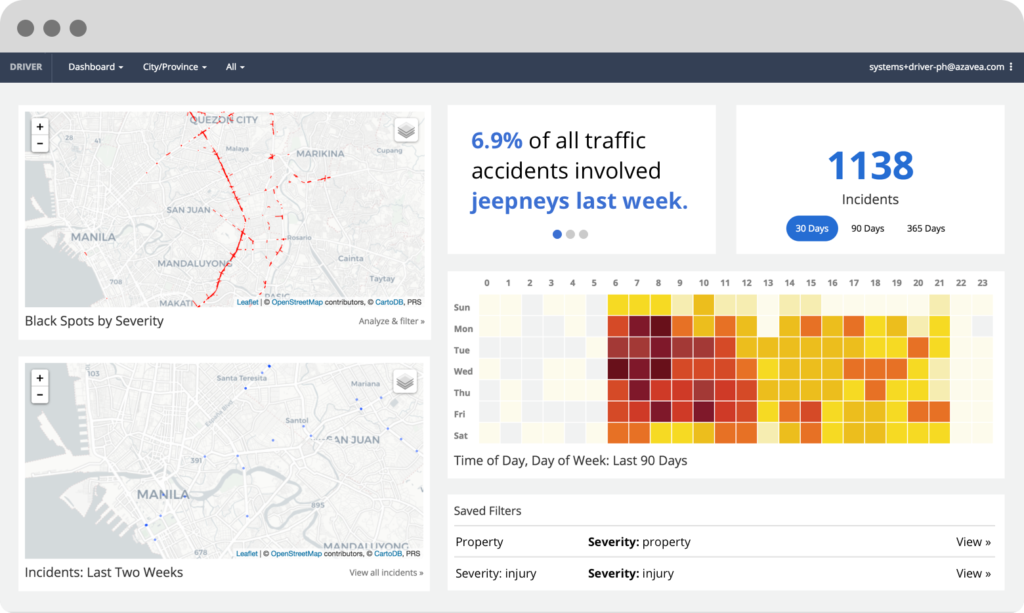

Project selection at Azavea

Over the past few months, several technology firms have come under increasing scrutiny by their own employees regarding the types of projects they take on. Google has received a great deal of attention concerning its work on Project Maven for the DoD as well as the development of a search engine that would be acceptable to Chinese government authorities. Amazon employees have expressed concern about use of its facial recognition algorithms for law enforcement agencies. Microsoft employees have called for the end to its contracts with ICE. And Salesforce employees have petitioned to end its relationships with some federal agencies. Strikingly, these disputes are being carried out in public.

At Azavea, we have had conversations (and disagreements) about which projects we should pursue for more than fifteen years. We are a mission-driven company, and we take pride in being selective about the work we take on, and most of my colleagues value this selectivity a great deal. But I began fielding more of these types of questions about three years ago, both in my monthly office hours and in interviews with prospective colleagues. I think some of this was driven by the fact that we were growing rapidly, and there were more new customers, but I have come to believe that it also reflects a cultural change; workers are more willing to overtly question the nature of their work and the degree to which it reflects their personal values, and I believe this is a positive shift.

After yet another discussion regarding some prospective R&D work and how we’d handled a similar decision in the past, I set out to write down a set of guidelines for selecting projects. The result is a lengthy document, the Azavea Project Selection Guidelines, that I shared internally about a year ago. The recent stream of stories about other tech firms has caused me to think it would be worth sharing a bit about how we make these decisions at Azavea. This post outlines our mission, the project selection criteria (the questions we ask ourselves), and a list of principles that we consider when choosing projects to take on at Azavea.

Mission-oriented work

Azavea’s mission is:

Advance the state of the art in geospatial technology and apply it for civic, social, and environmental impact.

Azavea creates software and data analytics for a broad range of customers. To stay true to our mission we attempt to make deliberate, considered decisions about which professional services projects we take on and what types of products we develop.

Our mission is rooted in the idea that technology can have a positive impact on individuals, our society, and the planet. It’s rarely easy or simple to understand how a given project will impact the world. The best we can do is to consider the likely effects of our work, discuss it, and make decisions that attempt to balance competing concerns. These decisions will rarely make everyone happy or align with every individual’s values, but we believe it’s important to provide an opportunity for discussion as well as articulate the reasoning behind specific decisions, particularly when a given decision may be complex or embody multiple considerations.

Questions we ask ourselves

We consider a number of criteria when we decide whether or not to take on a project. We want projects that will be successful, have the potential for positive impact, can be profitable, are technically challenging, and advance our strategic interests. Many projects will not have all of these attributes but we want to hit as many as we can. Our best projects take into consideration the following questions:

- Client/Customer

  - Does the client/customer have a clear understanding of what they want?

  - Are they someone we want to work with, someone we trust, and someone we can build a relationship with?

- Technology

  - Does the project involve working with geospatial data?

  - Do we have the technical skills, expertise, and capacity to take on the project?

  - Will the project enhance one or more of our open source projects or other research?

  - Will the effort advance the state of the art in geospatial technology?

  - Will we develop new skills that will help us in the future?

  - Is the customer able to maintain the technology in the future?

- Impact – Will the project have a positive civic, social, and/or environmental impact without compromising our values?

- People – Are our colleagues excited to work on the project, do they have the capacity to do so, and will they be challenged by the work?

- Networks and Relationships – Will working on this project develop a beneficial partnership or new client relationship?

- Financial Risk – Will this work be profitable? If not, is the financial risk worth it from a strategic perspective?

- Reputation – Will we be proud of this work? Does completing this project enhance our reputation or serve as a demonstration of our commitment to positive impact?

Principles

One of our values is to create more value than we capture, and there may be many paths to doing that. The Guidelines we put into place are intended to help serve as a set of thinking and discussion tools as well as a common vocabulary for carrying out such a discussion.

We want to be on the right side of history

Martin Luther King (and Theodore Parker before him) famously said “The arc of the moral universe is long, but it bends toward justice.” We should consider the long-term impacts of what we do, and there will be situations in which the short-term impact may be undesirable but we have an opportunity to have a long-term positive impact.

Open knowledge systems are usually better than closed

Another of our values is to “default to transparency”. We use, contribute to, and support open source, open data, open algorithms, and open science. We have an opportunity to contribute to shared knowledge that can be used by others and we take that opportunity wherever we can.

Have the courage to take on difficult topics

How should we live our lives? What should I do today? These are the core questions of ethics and philosophy. They rarely have easy, simple answers, but we need to have the courage to ask them.

We don’t renege on commitments in the middle of a contract

Sometimes we decide to take on a project, and we realize later that we made a mistake. Walking away mid-stream is rarely the honorable decision (though there may be rare situations in which this is warranted). We finish the work, we share the mistake within the company, we learn from the experience, and then we work toward extricating ourselves and determine how to avoid similar situations in the future.

Democracy is the worst system… except for all of the rest

We live in a society structured around representative democracy, civil society, and rule of law. Our work can support that or operate in opposition to it. When we have a choice, we want to respect and support that system.

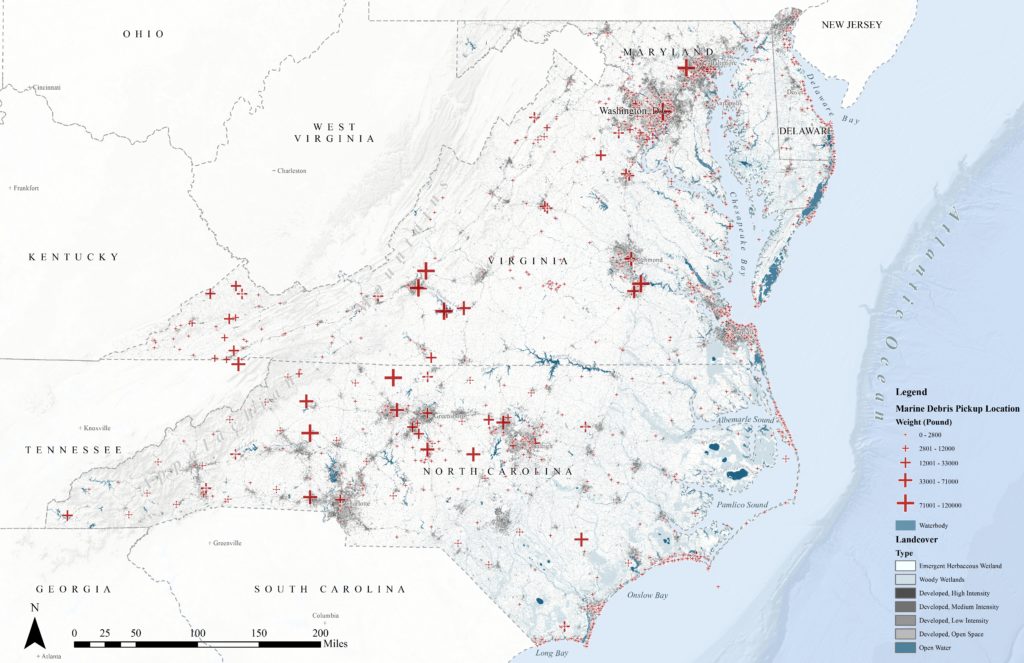

We only have one planet

This beautiful planet has finite resources, and humanity’s overall management of those resources has not generally been exemplary. This must change. When our work can contribute to reducing waste, reducing fossil fuel consumption, cleaning our environment, maintaining or growing species diversity, and other improvements to our dwelling on the planet, we should enthusiastically embrace them. And where there is a high likelihood that our work will damage the environment, we should attempt to reduce that harm or avoid the work altogether.

When there is uncertainty, we discuss the issues

A group of people our size cannot all share the same values, and we will not always agree on which projects and customers we should take on. When there is disagreement or uncertainty, there should be an opportunity to discuss it. This does not necessarily mean that the decision will be democratic, but it does mean that there should be an opportunity to discuss the questions and dispute the assumptions.

A team needs to own the project

Even if the executive team believes the project is worth taking on, if the team that will develop the software or data analytics is dead-set against it, the project won’t be successful, and we need to find a way to honor that opposition. This doesn’t mean everyone has a veto, but it does mean that consulting with the team is a key activity.

Above all, do no harm

We aim to work on projects that have the potential for positive impact, but it’s often difficult to know which projects will benefit others. And sometimes we work on projects that have much less impact than we originally hoped for. Further, we may take on some projects that are purely technical and it’s unclear how they will be applied. But, at the very least, we want to avoid projects that will clearly cause harm to individuals, communities, or society.

We do not work on weapons systems, warfighting, or activities that will violate privacy, human rights, or civil liberties

Azavea is not opposed to working with agencies engaged in defense and intelligence. However, when we engage with defense and intelligence agencies, we are careful to be clear about the purpose of the work and describe clear boundaries. There are several types of applications that we will not pursue including: weapons systems, warfighting, or activities that will violate privacy, human rights, or civil liberties. Further, we will not take on projects that involve working with classified data or require security clearances, and we will warn any job applicant who has a security clearance that we will not support maintenance of the clearance.

We do not work to support expansion of fossil fuel extraction

We will not pursue projects that directly support oil and gas extraction, expansion of extraction, or policy advocacy that promotes expanded fossil fuel extraction. We believe in the urgent need to take action to reduce carbon emissions and stave off catastrophic climate change. That said, like defense and intelligence, Azavea does not place a blanket prohibition on working with any particular organization or industry, and this includes organizations contributing to fossil fuel extraction and distribution. Global energy companies, some of which are among the largest corporations in the world, are precisely the organizations that must undergo dramatic change in order to avert future disaster for the planet and the humans that live on it. When fossil fuel extraction is already occurring, we will work to mitigate, prevent, or override the environmental impacts. When working with energy companies, Azavea will seek to take on projects that help reduce their environmental impact and, in so doing, also work to support changemakers within these organizations that share our values and beliefs.

Case studies

While many decisions are clear-cut, this Guidelines document is aimed at helping us think through the tougher decisions in which we clearly meet some of our criteria but perhaps not others, or where the technology we build may have multiple uses. In addition, the impact of any given project can rarely be fully known at the outset. We’re often taking a risk, and sometimes we make the wrong decision. Further, it’s often easier to reason with examples than with a series of abstract principles. So the Guidelines document also includes a couple of dozen case studies that describe situations that represent some of the more difficult decisions over the past several years. Some of these are projects that we turned down. Some are projects we agreed to do, and I think we made good decisions. Others are ones where we got it wrong. Each case study includes some background and a description of how the decision was made. Where relevant, I’ve also written a retrospective indicating whether or not I think we made the right decision and why. To be clear, this is from my perspective as the CEO. It is unlikely that we’ll all agree on every decision, and while I’m pretty interested in having a discussion about the tough calls, the final decision usually rests with me or one of the other executives.

Because there is a lot of customer information in these case studies, I’m not going to go into detail about any of them here, but I’d like to at least address some representative questions in order to give a sense of how we apply the framework.

Will Azavea work on fracking projects?

Maybe. It’s complicated. We have turned down at least two large projects for private firms aimed at carrying out or increasing fracking at the expense of local water supplies and the nearby communities. But we did develop a proposal for a watershed authority that was interested in developing a software-driven scoring system for assessing ecosystem impact aimed at reducing the impact of fracking on ecosystems, habitats, and water. As mentioned above, we won’t help an energy company site a new pipeline or well pad, but we might help build tools to reduce spills and environmental damage on a pipeline that already exists. And if a big petroleum company is also building windmills and solar arrays, we would be willing to take on work that supports those renewable energy efforts. Key questions: We only have one planet; what is the intent of the work, and what are the likely outcomes?

Will Azavea work on defense projects? What about intelligence agencies?

Maybe. It’s complicated. The U.S. defense and intelligence agencies represent the largest expenditures on geospatial technology in the world, and Azavea mostly does not pursue that work. Some of my colleagues believe that we should never work with the Defense Department. I disagree. The DoD’s Army Corps of Engineers builds and maintains much of the national civil water infrastructure, and I do not think it violates the company’s mission to work with the Army Corps on water infrastructure. The DoD operates bases and an enormous amount of land. That land has to be managed, it is habitat for endangered species, the bases affect the economic development of the nearby communities, and so on. The DoD engages in health research, humanitarian relief operations, and data collection that is then made available to the public. Further, I believe that we need the highest quality, most effective military that we can afford. Our military and intelligence agencies play important roles in the world, and while I frequently disagree with the way we as a nation have used our military and intelligence capabilities, as Jen Pahlka recently pointed out, dull knives are dangerous.

So how does Azavea handle these questions? Well, we start by assuming that no organization is inherently bad in all of its aspects. We work on civil water infrastructure projects for the Army Corps of Engineers, and we have developed an analysis tool for evaluating the economic impact of military bases in the United States. One of our clear principles is: “we do not work on weapons systems, warfighting, or activities that will violate privacy, human rights, or civil liberties”. However, it’s a tougher decision when the funds are coming from DoD but it’s not clear exactly how the work will be used. For example, we have accepted contracts that are funded by DoD to support improvements to our open source GeoTrellis library, which is used for doing large scale data processing and analysis with satellite and aerial imagery. It’s an open source library, so we cannot control how it’s used by others. And while Azavea funded much of the early development of GeoTrellis, it seems sanctimonious to declare that we’ll walk away from funding that would improve a general purpose open source library. So on this type of contract, we have set some boundaries for the prime contractor (we are a subcontractor under another firm):

- All of our work will be released under an open source license and made available to anyone that wants to use it

- We will not work with data or deploy to environments that require a security clearance, and none of our staff will be expected to obtain a security clearance

- For a given application or feature we develop, there must be a clear civilian or humanitarian use; we will not build features or applications where the primary purpose is warfighting or surveillance

Key questions: If the intent and outcomes are unclear or outside our control, can we set boundaries that will minimize the risk that our work will support activities we would otherwise oppose? Can we ensure that there are clear civilian and humanitarian applications or a clear public benefit that will arise from our work?

Politics and project selection

As outlined in a blog post I wrote in 2017, I am not afraid to put Azavea’s name behind a political position when I believe the question at stake directly affects the company or has an impact on the fundamental principles of democracy. However, I have generally tried to do so in a way that reflects a nonpartisan stance.

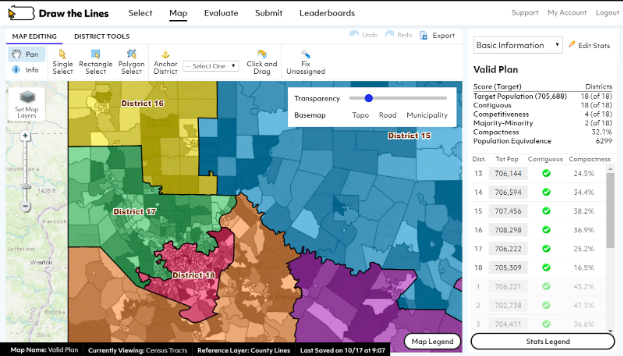

For example, Azavea is deeply engaged in legislative redistricting, but we have approached it from the perspective of reinforcing effective representative democracy and the public interest, and we have avoided supporting party-driven redistricting efforts.

We also build and support a product, Cicero, that supports citizen advocacy and lobbying efforts by identifying the elected officials that represent a given location and the various ways of contacting those officials. We have attempted to maintain a nonpartisan stance with respect to Cicero customers. This is relatively rare in the current political industrial complex, where almost every product and service is aligned with one of the two political parties, but I believe it’s the right decision for us.

Some of my colleagues don’t agree and have said so. “Why are we providing services to XYZ organization? I am morally opposed to their position.” Often, at a personal level, I agree with the colleague raising the concern. But I also believe that the current party-driven political industrial complex is generating a toxic stew of anger and resentment that is doing significant damage to our democratic norms and institutions while failing to serve most citizens. Azavea is providing a service that enables citizens to more easily contact their elected representative, and I believe efforts to support representative democracy should trump party affiliation. For the (thankfully) hypothetical situation in which a customer might use Cicero to threaten or harass someone or carry out an activity that violates basic human rights or civil liberties, our product terms and conditions define these as unacceptable uses, and we reserve the right to terminate their access to the service.

Questions we haven’t answered yet

The Guidelines also include a section on questions that remain up in the air. For example, while we aim for a Hippocratic “first, do no harm” principle, there are going to be situations in which that’s impossible. Under these circumstances, should we implement a type of “harm offset”? For example, if we take on a client whose work we expect to have the potential for indirect harm, should we set aside a portion of the revenue derived from that work and make a donation to a nonprofit organization that attempts to mitigate or offset the harm? It’s not clear that this is a good idea. On the one hand, it’s a nice gesture, but, on the other hand, it seems to raise the risk of a “moral hazard”, a situation in which it becomes okay to commit a wrong deed because it can be expiated by some compensating action. Still, it has become a common practice to mitigate carbon and other greenhouse gas emissions by contributing to reforestation efforts. Should we do this? Under what circumstances would it be ok? We haven’t answered these questions.

New norms

Humans have probably been debating and disagreeing about the alignment of group values with individual values for as long as we’ve had language. But I believe these recent stories about some of our large technology corporations suggest a shift in cultural norms away from internal debate and towards public petition.

I feel incredibly fortunate that Azavea has had the luxury of being selective about which projects we work on while still being able to grow and thrive. I’ve described a bunch of questions we ask about each project, some principles we apply to guide our decisions, and some examples of how we’ve applied them. It’s important to note that no single principle is a litmus test. The world is often messy and unclear, and people aren’t always straightforward about their intent and desired outcomes. The principles I’ve described are not a coherent ethical system; they will sometimes contradict each other and are subject to a great deal of interpretation. They also won’t cover every situation. But they have been well received internally at Azavea, and I think they give us some measure of confidence as we grapple with future decisions.

We usually think of democracy as applying to our government, but many of the basic activities and structures of democracy also apply to the organizations in which we work. Most U.S. corporations are operated for the sake of their shareholders, who (at least conceptually) elect the Board of Directors, which, in turn, appoints the executives. But the primacy of shareholders has distorted the priorities of many of these organizations.

Azavea is one of these for-profit corporations, but we’re also a certified B Corporation. The idea behind B Corporations is based on the principle that businesses should serve the interest of a broader group of stakeholders, and I believe employees should be a key constituency in most organizations.

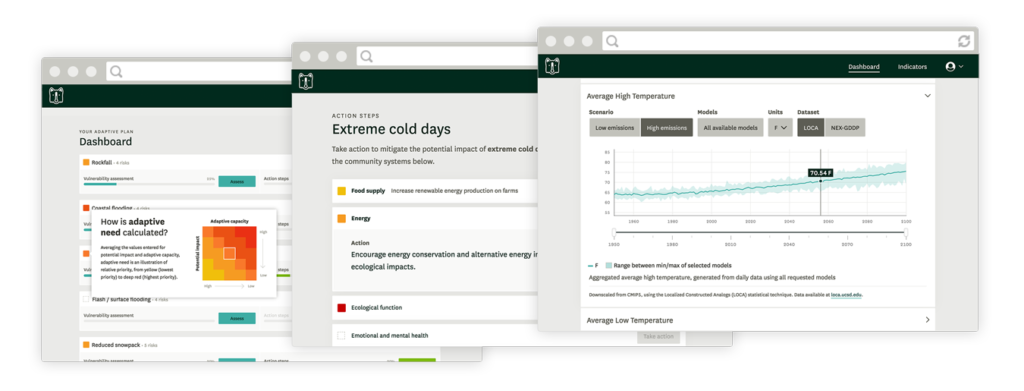

I therefore think the questions tech workers are raising with their employers are both legitimate and encouraging. They suggest a shift in the culture that reflects a significant desire for people to work in organizations that reflect their values. One interesting outcome of the Project Maven controversy at Google is a public set of principles for guiding their future work on AI projects. Other organizations have signed a pledge to not work on AI-based weaponry. The Omidyar Network and the Institute for the Future have developed the Ethical OS, a toolkit for evaluating the potential harms of new technology.

These are encouraging outcomes to the controversy and have the potential to evolve into new norms that will manage future research and technology development. We need more of this. What can you do to contribute to new norms of behavior for how we use technology? Does your software development and data science work incorporate ethical considerations when you build it?