A lot has changed over the past two years. We are now in the midst of multiple planetary-scale crises – COVID, climate change, poverty, food, security, democracy, among others. I believe that the urgency of these conditions requires similarly urgent action by individuals, organizations, and governments, and I want to see Azavea contribute to addressing them. Some key elements of our approach include:

- Focus on climate, water, and sustainability – Azavea builds software that addresses challenges in many domains including: legislative redistricting in democracies, apparel supply chains, urban forestry, and road safety. We’re continuing to make contributions in these areas, but we are focusing most of our proactive business development and marketing efforts on urgent issues of climate change, water, and building sustainable infrastructure that will enable the prosperous societies of the future.

- Invest in geospatial machine learning – A key way to address planetary-scale crises will be better measurement of what’s happening. The multiple, global constellations of satellite, aerial, and ground sensors are generating avalanches of data that are set to grow further. Machine automation is the only feasible way to collectively leverage the data we are gathering in order to help us make better decisions.

- Get comfortable with processing and visualizing data at a planetary scale – Azavea began as a local, Philadelphia company, and I remain proud of the work that we’ve done at this level, but almost all of our work today is national or international, and many of our customers are addressing concerns at a global scale. Our investments in knowledge and tooling are enabling us to work with global datasets in a range of different use cases.

- Planetary-scale challenges will often require a lot of computing power – Azavea was an early adopter of Amazon Web Services, and today almost everything we build is aimed at cloud infrastructure with AWS being the most common destination.

- Open knowledge ecosystems are the bedrock of innovation – Open source software has been the foundation on which both the general internet and cloud infrastructure have been built. Alongside open source, open data and open standards provide the scaffolding that I believe enables both a powerful innovation ecosystem and a level playing field on which individuals and businesses can operate.

- Creating great user experiences is critical to our success – We may be leveraging networks of machines to process planetary-scale data, but the end-users of our work remain humans trying to understand their world and make better decisions about how they live. The design of high-quality software user experiences (UX) is a central pillar of how we operate, and we have a dedicated team of UX professionals.

With that framing in mind, what about the trends? Forecasting is hard, so I’m going to try to ground most of my comments in personal experiences and highlight some developments I expect will happen and some that may represent wishful thinking.

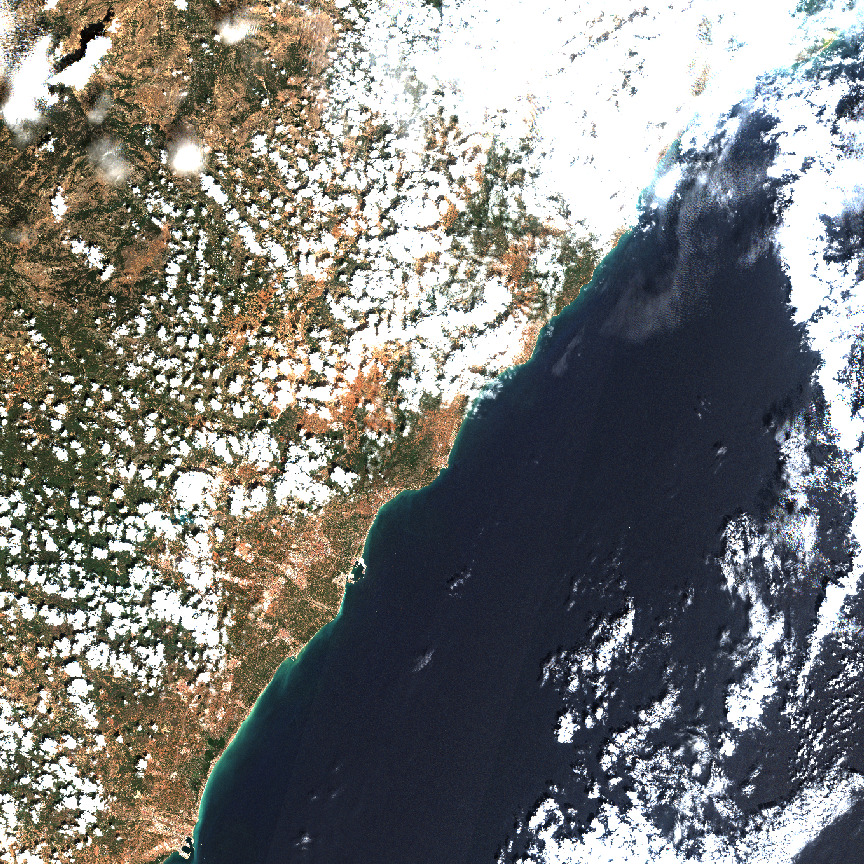

1. Earth observation satellites will continue to proliferate and expand our view of the planet while generating a growing avalanche of data.

Planet Labs transitioned from being an investor-funded private company to being a publicly traded firm in early December. This is a big deal on a couple of fronts. It provides an Earth observation firm with more than 200 satellites (and 44 more just launched) the capital it will need to continue growing and expanding its impact. I am also inspired by their mission: “to use space to help life on Earth, by imaging the whole world every day and making global change visible, accessible, and actionable.” And I don’t think this mission is window dressing – when Planet went public in December, they did so as a Public Benefit Corporation, a legal structure that enables them to pursue a purpose beyond simply generating profits. Frankly, I don’t think many other satellite companies will follow that lead, but a lot of investor capital went into Earth observation startups over the past two years. While I think the New Space SPAC splurge is winding down, there is a lot of money sloshing around in this ecosystem, and it’s likely to mean a continued rapid expansion in the number of sensors in orbit. This expectation of rapid sensor growth was the key insight Azavea had in 2012 when we released the first version of our GeoTrellis framework, and it has guided our other investments in open source imagery processing technology, like Raster Vision, Franklin, and GroundWork. Many of these new firms will not survive, but the new Earth sensing capabilities will be critical to responding to many of the urgent challenges we face. Azavea is continuing to engage in research, including a project with NASA to develop tools for hyperspectral imagery and an effort funded by NOAA to use Earth observation imagery for mapping water features.

2. Climate-specific Earth observation satellites will grow in number.

NASA, ESA, JAXA, and other national space agencies are investing in launching new climate-monitoring satellites, but these government efforts tend to be big, long-term projects, and we’re also going to need the agility and speed of the private sector to enrich these efforts. The recently announced Carbon Mapper project is a collaboration between private firms, nonprofit organizations, and the Jet Propulsion Laboratory (JPL) to launch and operate a pair of hyperspectral imaging satellites that will complement ongoing government investments by providing “comprehensive, accurate, and timely measurement of methane, carbon dioxide, and 25+ other environmental indicators that are needed to closely monitor the health of the planet”. These new satellites will be developed in 2022 and are expected to launch in 2023, and the data will be released under an open license. We can’t fix what we can’t measure, and these new instruments will help us get the measurements we need. It’s tough for me to say for sure, but Azavea’s ongoing R&D project with NASA to develop new tools for working with hyperspectral data may enable us to leverage this data once it’s available.

3. Geospatial machine learning will continue to advance, but we need more open training data.

Azavea has been investing in geospatial machine learning, particularly with Earth observation imagery, for the past several years and our various client engagements have given us insight into some of the most common challenges to using AI tools with geospatial imagery. I believe “training data” is one key area of concern. You’ve likely read a lot about AI and machine learning over the past several years. While the concepts stretch back half a century, a great deal of rapid progress has been made in recent years, and I attribute this to a few key factors:

- Availability of scalable computing, storage, and infrastructure – Amazon Web Services, Azure, Google Cloud Platform, and other cloud providers have made the execution of large, variable computational workloads feasible without investment in a lot of your own hardware.

- Open-source software tools – most of the common machine learning frameworks (PyTorch, TensorFlow, FastAI, Keras, MXNet, etc.), including Azavea’s own Raster Vision effort, are available under open licenses that enable broad re-use. This substrate of shared ideas accelerates development and learning by a global community while still enabling commercial products and services to be built on top.

- Openly licensed training data – Machine learning models “learn” by looking at lots of examples and then developing a statistical model of, for example, what a “cat” looks like in a photo. We’ve all enjoyed the fruits of the machine learning revolution; we’ve got dramatically better phone cameras and photo manipulation software, language translators, and a variety of other applications. But the somewhat under-celebrated heroes of this progress have been the free databases of examples that can be used to “train” the learning models. For photography, it has been the ImageNet database: 14 million photos and labels of what’s in each photo. For language translation, there’s CommonCrawl, WikiMatrix, and the CCMatrix databases.

For the geospatial world, I’d like to focus on this last item. There is a lot of machine learning work being done by private and university research projects using Earth observation imagery. This is important progress, but there is not yet a shared and openly licensed database of geospatial training data equivalent to ImageNet. This is not a new observation. A few years ago, In-Q-Tel and its CosmiQ Works project worked hard to develop SpaceNet by hosting a series of public competitions, but it was limited in scope and coverage. A more recent effort, the Radiant MLHub, aims to build a shared global resource of training data. And we’re putting our money where our mouth is: in 2021, Azavea released a cloud (the water vapor kind, not the computing kind) training data set built from Sentinel-2 imagery. This is incredibly important work, and I’d like to see efforts like MLHub grow significantly in 2022.

4. OpenStreetMap will continue to grow through a combination of volunteers and private firms.

I have a lot to say about OpenStreetMap, but my summary in this context is that I think it remains a key model for building a collectively owned and publicly available map of the built environment. It’s amazing what has been accomplished, but, as I noted above, most of the human-built environment remains unmapped, and a lot of work remains to be done. In recent years, there have been significant and growing investments in labor and technology from private firms, like Facebook/Meta, Microsoft, Apple, Mapbox, and Amazon, while volunteer mappers continue to make enormous contributions as well. I think this is all going to continue to grow as more people find utility in this type of shared data infrastructure. I think it’s also important to note that some of the investment by private firms is because the license terms and costs for using Google Maps necessitate some kind of alternative geospatial data infrastructure. Facebook/Meta and Microsoft, in particular, are applying the latest machine learning innovations to accelerate improvements in data coverage and quality at a global scale.

5. Data Trusts will get off the ground.

Last year, I joined the advisory board of a new organization, PLACE, a non-profit building a “data trust” for mapping data, particularly for locations that are not well mapped. Above, I described OpenStreetMap as public data infrastructure with an open license that supports a broad range of uses. I also referenced the Google Maps product. Google gathers or licenses all of its own data and packages this into a commercial license that enables them both to collect a fee for usage and to limit the ways in which it’s used (for example, bizarrely, you can’t use the Street View feature for mapping trees). A data trust is one option that falls between these two poles. Based on the ideas of Elinor Ostrom and others, a data trust aims to establish a “data as a commons” structure whereby stakeholder access is balanced against sustainability of the commons (thereby avoiding a “tragedy of the commons” phenomenon). An independent Board of Trustees oversees governance of the trust and ensures that equitable rules are applied to all of the participants. PLACE’s mission is to map the urban world with high-resolution imagery and make the resulting maps open, reliable, and accessible by placing them in a perpetual trust that operates in the public interest. PLACE partners with governments to collect geospatial data and transfers all of the rights and IP to the government entity. The government entity then, in turn, provides PLACE with an irrevocable, non-exclusive, perpetual license to host, maintain, and distribute a copy of the data to its members. Members pay membership dues and agree to membership terms and conditions. PLACE uses member fees and other funding to operate the Trust and pay for local data collections. In reality, this is tricky. How much should members pay? What terms should members agree to when they join the trust? Who should be allowed as a member? How should data collection by local businesses be incentivized? These and many other questions need to be answered, and there aren’t many data trusts to use as examples and none that focus on geospatial data. If successful, PLACE could accelerate development and availability of mapped geospatial data in the very places that are least mapped today.

6. Location and mobility data collection will remain fraught.

Like many of you, I downloaded and installed a contact tracing application provided by my state (the Commonwealth of Pennsylvania) and made possible by a collaboration between Google and Apple. By this time, most of us know that mobile telecom carriers and mobile OS developers (Google and Apple) have unprecedented access to data about how we move around in our communities, and over the past two years, we have discovered both new benefits and new abuses of this data. When I downloaded that contact tracing app, I was engaging in participatory self-surveillance, but I judged that, despite the privacy concerns, the collective data had significant public value and would help officials understand how the COVID-19 pandemic was spreading. But, aside from COVID, we’ve also learned how mobility data is being sold, re-sold, and used for surveillance purposes. More than 40 companies now make up a $10 billion industry that collects, buys, sells, and aggregates this data. While Apple and Google have banned app developers from using location SDKs from some of these data companies, these bans are already being evaded, and I expect this will be a running battle between the mobile OS providers and the growing location data industry. I think there is a clear opportunity for government action both to regulate collection and use of this data and to create a public good by enabling the aggregation and sharing of mobility data in a responsible manner. A recent report from The Data Economy Lab suggests some potential paths forward:

- Develop new data stewardship models, like “data trusts” (described above)

- Develop dynamic regulation that builds guardrails around the collection, use, and sharing of location data

- Build a collaborative, privacy-by-design ecosystem for sharing critical data (such as for public health)

- Build the capacity of communities and civil society organizations to collect and control location and mobility data related to health

What Won’t Change

I’ve outlined some of the trends that I see for the coming year, but I think it’s also helpful to think about what likely won’t change in the next year (and probably for many years beyond):

- Geospatial, place, and location will continue to matter

- User Experience will continue to matter

- People will want to do meaningful work that contributes to something larger than themselves

- People will want to express themselves in new and innovative ways

Closing

The last couple of years have been tough for many of us. Currently, in the depths of the omicron variant, it’s sometimes hard to see the bright side of things. It’s easy to forget that the Renaissance was preceded by the Bubonic Plague and the Roaring ’20s were preceded by the Spanish Flu. At the beginning of 2022, I am heartened. I believe we are in the midst of an awakening at a planetary scale – not only are we more aware of human activity through the internet, we are also deploying sensors in the air, on the ground, in the ocean, and in orbit that enable us to know more and take more effective action. The marketing guru Seth Godin recently wrote one of his short articles on “generative hobbies”, which he describes as endeavors that involve community, leadership, and creation of public goods. While Azavea rarely feels like a hobby, I continue to be inspired by our mission, the communities we contribute to, the leadership we demonstrate, and the public goods that we have a chance to build with our partners and clients. I’m looking forward to the coming year.